People shop for outcomes, not items. Retailers should help them get the job done with AI.

- Teresa Sperti

- Mar 31

Updated: Mar 31

Retail has been organised around products for decades. Categories, departments and individual SKUs shape how retailers build their stores and web experiences, and how customers are expected to navigate them.

That structure makes sense operationally. It’s how inventory is managed, ranges are planned and website structures are defined.

But it doesn’t reflect how people actually shop.

Many shoppers don’t start with a product in mind. They start with a problem they are trying to solve.

It might be something practical, like how to cook a specific dish or which tool to choose for a DIY project at home. It might be something more personal, like what to wear to a summer wedding. The starting point, more often than we think, is “help me do this”.

For years, customers have worked around retail experiences that weren’t designed this way. They searched, clicked, filtered, compared, opened multiple tabs, watched videos, read reviews, and stitched the journey together themselves.

Now AI is starting to support the journey the way shoppers actually behave.

And that is changing the role retail needs to play and how experiences are delivered.

AI is accelerating a shift that was already happening

What’s changing now is that discovery has started to move away from keywords and towards conversations.

Instead of typing a keyword, shoppers ask a question or start a conversation. Those questions are becoming more specific, are phrased in natural language and typically carry important contextual cues. Recent research from SEMrush shows that over half of consumers now include constraints and needs upfront, whether that’s budget, use case or compatibility, while a third refine their query through back-and-forth interactions.

The way AI interfaces are built also lends itself naturally to problem solving. AI can interpret context and meaning, and it offers a more dynamic and iterative way to engage than traditional channels like search, which were static. This shift allows shoppers to enlist AI engines to help with core jobs to be done, and that is now occurring across many categories, from food to fashion, travel, health and home.

Common jobs to be done via AI

Grocery → plan meals and shop for occasions: meal planning, dietary needs, recipes, entertaining, building shopping lists.

Fashion → choose what to wear for a situation: outfit selection, event dressing, seasonal styles, fit and suitability.

Beauty → find the right product for my skin, look, or routine: shade matching, skincare for skin type or concern, makeup looks, routines, product compatibility, and how-to guidance.

Travel → plan trips end-to-end: destination ideas, itineraries, timing, accommodation, activities.

Health → understand symptoms and decide next steps: diagnosis guidance, treatment options, product support, when to seek help.

Home → work out how to complete a project: how-to advice, materials needed, tool selection, step-by-step guidance.

Gifts → decide what to buy for someone else: ideas by age, occasion, budget, interests.

Fitness / lifestyle → build routines and plans: workout plans, nutrition, goals, equipment needed.

I tested this recently by asking ChatGPT what I should wear to a summer wedding in Italy.

Instead of instantly showing products, it asked clarifying questions, concierge style. What is the venue? What time is the wedding? What is the dress code? What are my hair colour and skin tone? Are there any styles I don’t like wearing?

Then came the suggestions. Not just a dress, but shoes and accessories with links to purchase.

It didn’t feel like search. It felt like a personal shopper.

That level of specificity changes what gets surfaced. Product content starts to influence decisions much earlier in the journey, not just at the point of conversion.

The rest of the journey hasn’t disappeared, though.

Consumers are still moving across Google, Reddit, YouTube, Pinterest, and TikTok as part of the process. These platforms still play key roles in inspiration and conversion, but AI is helping shoppers work through jobs to be done in ways they historically couldn’t.

Retailer experiences evolving in the face of change

If the starting point of the journey is changing, the retail experience needs to change with it. Some retailers are starting to respond in ways that reflect this, to protect their relevance beyond the transaction.

Job to be done: outfit selection and style matching

THE ICONIC is investing in more flexible tools that make it easier for shoppers to recreate a look and build outfits around the styles they like. Instead of forcing customers to navigate predefined categories, the experience is starting to adapt to personal style and intent first.

With Snap to Shop, customers can upload an image of an outfit they like, and THE ICONIC will return the closest matching products. The feature combines visual search with AI to interpret the look and surface relevant items quickly.

In testing, the tool was able to accurately match a pleated tailored black pant and cropped cardigan combination from a photo, showing how the experience is shifting from browsing categories to solving the job of decking out my wardrobe with my favourite looks.

At the NORA Retail GenAI Summit in Melbourne, THE ICONIC CEO Jere Calmes suggested that, five years from now, the traditional search bar may no longer be a permanent fixture in retail experiences. While AI is already changing the experience, there is still much to come as the technology paves the way for a new kind of shopping.

Job to be done: what makeup and skincare suits me

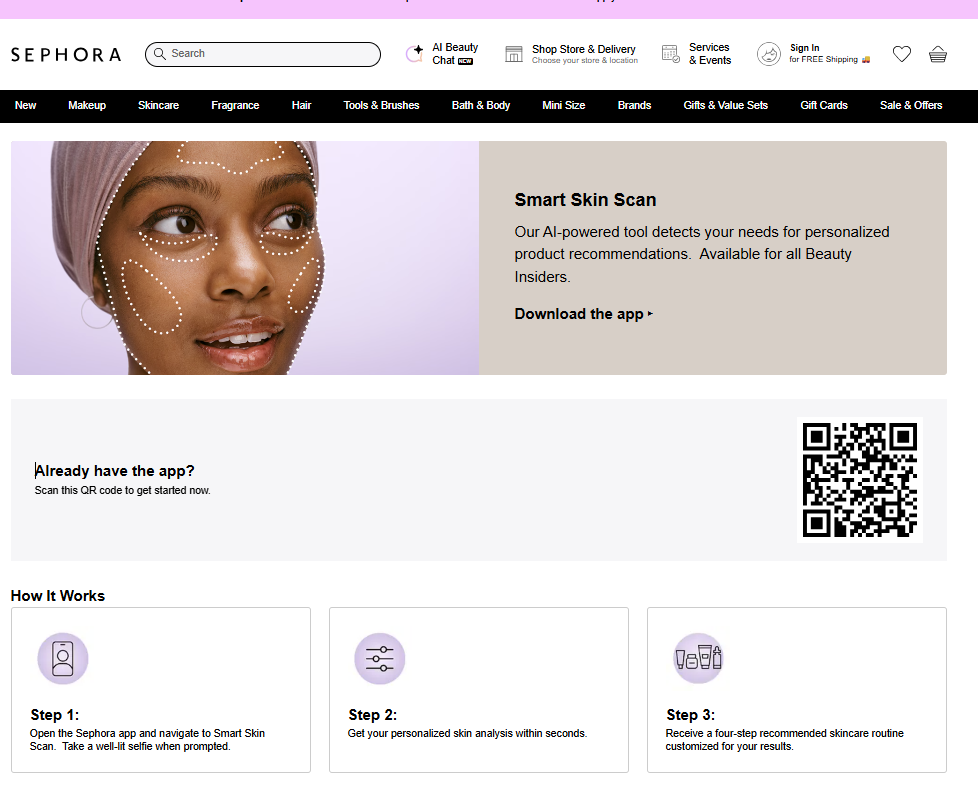

In beauty, Sephora has been building towards this for some time, with an array of AI-powered tools and features that support shoppers' jobs to be done.

Virtual Artist uses AR and AI to simulate real-time makeup try-ons, mapping products to facial features and adjusting for skin tone. It reflects how people actually shop for makeup, starting with a look they want to achieve, whether that’s a smokey eye, contouring or a bold lip, and then building the products around it. For those starting with a specific product, it also provides confidence that it suits their complexion and overall look.

Its Smart Skin Scan AI product, meanwhile, is designed to provide an affordable way to identify and address skincare needs without having to visit an expert. Smart Skin Scan analyses images uploaded by the shopper and provides a skincare routine tailored to the individual's needs, based on seven key concerns: fine lines and wrinkles, dark spots, uneven texture, redness, dryness, pores and blemishes.

Job to be done: complete a DIY project

Home improvement is one of the clearest examples of this. People aren’t shopping for a single product; they’re trying to build something, fix something or improve something.

Just this week Bunnings launched Ask Buddy, expanding its AI capabilities following new partnerships between its parent company Wesfarmers and technology partners including Google and Microsoft.

I asked Buddy to help me to build a cabinet. Buddy asked follow-up questions about the type of cabinet, materials and budget. Instead of simply pointing to existing content, it began shaping the project itself, guiding the conversation towards the tools, materials and decisions needed to complete the task.

It also carries context across the conversation and can integrate signals such as past purchase history, moving the experience closer to an agentic model.

The latest launch shows that earlier learnings from its AI endeavours have proved valuable. In December 2025, Bunnings found itself in the media over advice from its Ask Bunnings AI tool, which gave guidance on tasks like rewiring plugs, work that is restricted to licensed professionals in some states.

Rather than retreating from AI completely, Bunnings has used these learnings to adapt its approach. Buddy introduces stronger guardrails, making it clearer to the user where a qualified professional is required.

What stands out is the speed of this evolution.

Within a short period of time, the Bunnings experience has moved from static project guides, to chatbot-style retrieval (through Ask Bunnings), to an assistant that actively helps shape the project.

For retailers, that changes the role of the website. It is no longer just a place to search for products, but a place where shoppers can start solving the job they are trying to get done.

Just the beginning of shopping re-imagined

As these examples illustrate, these are not just new features; they are signals that the centre of the shopping journey is shifting.

The opportunity is not simply to make search better. It is to rethink how the entire experience is designed, with jobs to be done at the centre.

The retailers who win will be the ones who stop organising the journey around products, and start designing it around the problems customers are trying to solve and the outcomes they are seeking to achieve.

Because in an AI-led discovery world, the brand or retailer that helps the shopper figure it out first is far more likely to be the one that ends up in the basket.